AI Does Not Solve Ambiguity. It Scales It.

Ryan Riccucci recently posed a deceptively simple question: what do we actually mean when we say “C-UAS mission”?

It is a useful question precisely because it exposes a familiar enterprise problem. The same phrase can point to very different realities depending on who is using it. For one stakeholder, it may describe an operational mission. For another, a technology problem. For another, a program of record. For another, a policy domain. For another still, a threat category.

The words remain the same. The implied meaning does not.

That matters more than it may seem. Once a term begins to carry several meanings at the same time, ambiguity does not stay neatly contained in conversation. It propagates into requirements, funding, governance, acquisition decisions, workflows, and operational execution. And when that ambiguity is not resolved upstream, it gets absorbed downstream by the operator.

This is why semantics matter.

Not as an academic exercise. Not as a metadata clean-up effort. And not as a technical layer added after the fact. Semantics matter because complex organizations cannot coordinate action reliably when the meaning of their core concepts remains implicit, unstable, or contested.

Too often, enterprises assume that shared vocabulary means shared understanding. It does not. Shared words are frequently standing in for different mental models, different institutional incentives, and different operational assumptions. That may be manageable in a meeting, but it becomes much more costly when translated into systems, interfaces, analytics, and automation.

This is where many AI conversations still miss the point.

There is a growing tendency to treat AI as though it can reason its way across fragmented data, inconsistent terminology, and unresolved enterprise meaning. The assumption is that if enough information is made available to a model, it will somehow synthesize clarity out of ambiguity. In practice, the opposite is often true.

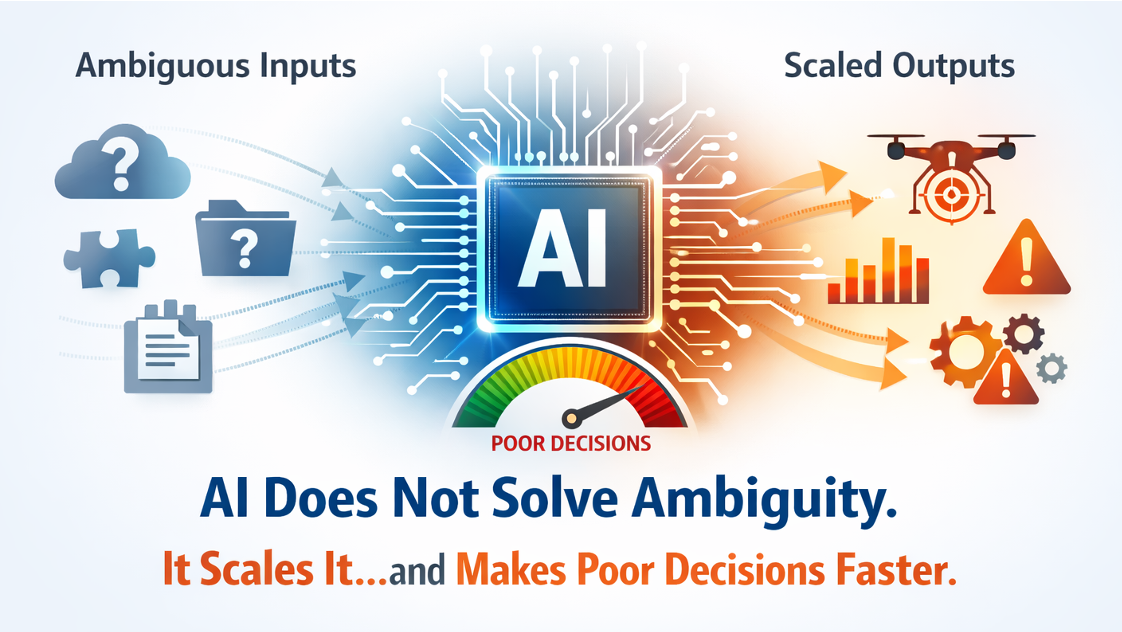

AI does not solve ambiguity. It scales it.

AI does not resolve unclear meaning. It amplifies it, accelerating flawed assumptions into faster, larger-scale decisions and their associated outcomes.

Garbage in = Garbage out … at AI speed.

If the enterprise has not established what a concept actually is, what relationships define it, what authorities govern it, what evidence supports it, and what actions are permitted in relation to it, then AI will not repair that gap. It will operate on whatever context it is given – partial, local, inconsistent, or poorly governed – and do so at machine speed.

That can make the output appear sophisticated while leaving the underlying meaning unresolved. In some cases, it can make the problem worse by giving ambiguity the appearance of precision.

This is one reason Gartner’s recent emphasis on context engineering is so important. The shift from prompt engineering to context engineering reflects a broader realization that better AI outcomes do not come primarily from clever prompts. They come from the quality, structure, governance, and relevance of the context surrounding the model. That includes the relationships among data, the policy boundaries that shape action, the lineage and provenance that establish trust, and the memory structures that preserve continuity over time.

That is, in effect, a recognition that meaning must be engineered. And that is exactly where a durable, machine-understandable semantic substrate becomes essential.

The problem facing most organizations is not a lack of data. It is the lack of a durable way to represent meaning so that it survives across systems, organizations, and time. In the absence of that foundation, meaning gets recreated locally inside applications, interfaces, spreadsheets, and human interpretation. Every integration becomes more brittle than it appears because what is being connected is not just data, but differing assumptions about what the data refers to.

This is why the Knowledge-Centric Engineering Framework matters.

KCEF begins from a premise that should be obvious, but too often is not: shared vocabulary is not enough. Enterprises need a durable semantic foundation that makes concepts explicit, preserves context, governs relationships, and binds action to policy, authority, and evidence. Without that, interoperability remains shallow, automation remains fragile, and governance becomes reactive.

A durable semantic substrate changes the equation. It allows the enterprise to distinguish between the mission, the capability, the program, the threat, and the governing authority without collapsing them into one overloaded term. It provides stable meaning that workflows, systems, and analytics can connect to, even as tools change, schemas drift, and organizations evolve.
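To make that distinction concrete, here is a minimal sketch in Python. It is not KCEF's actual data model; the `Concept` type, the `ConceptKind` values, the `ex:` identifiers, and the `resolve` helper are all hypothetical names chosen for illustration. The point is only that one overloaded label can map to several separately identified concepts, and that a system can refuse to guess when the intended sense has not been stated.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ConceptKind(Enum):
    """The senses the article says get collapsed into one term."""
    MISSION = "mission"
    CAPABILITY = "capability"
    PROGRAM = "program"
    THREAT = "threat"
    AUTHORITY = "governing authority"


@dataclass(frozen=True)
class Concept:
    concept_id: str    # stable identifier, independent of tools and schemas
    kind: ConceptKind  # which sense of the term this entry represents
    label: str         # the human-facing phrase, which may be shared


# Hypothetical registry: one shared label, several distinct concepts.
REGISTRY = [
    Concept("ex:cuas-mission", ConceptKind.MISSION, "C-UAS"),
    Concept("ex:cuas-capability", ConceptKind.CAPABILITY, "C-UAS"),
    Concept("ex:cuas-program", ConceptKind.PROGRAM, "C-UAS"),
    Concept("ex:cuas-threat", ConceptKind.THREAT, "C-UAS"),
]


def resolve(label: str, kind: Optional[ConceptKind] = None) -> Concept:
    """Return the single concept a label refers to, or fail if it is ambiguous."""
    matches = [
        c for c in REGISTRY
        if c.label == label and (kind is None or c.kind == kind)
    ]
    if len(matches) != 1:
        candidates = sorted(c.kind.value for c in matches)
        raise LookupError(
            f"{label!r} is ambiguous or unknown; candidates: {candidates}"
        )
    return matches[0]
```

Under these assumptions, `resolve("C-UAS", ConceptKind.PROGRAM)` returns the program entry, while a bare `resolve("C-UAS")` raises an error instead of silently picking one meaning. Failing loudly is a design choice here: it pushes the ambiguity back upstream rather than passing it on to whatever consumes the result.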

That is what makes interoperability durable rather than incidental. It is also what makes automation governable.

As AI becomes more deeply embedded in workflows and decision support, the need for explicit, machine-understandable meaning only increases. Systems cannot act responsibly on implicit assumptions. They cannot reliably coordinate across institutional boundaries if the concepts they are using have never been formally aligned. And they certainly cannot support bounded autonomy if the boundaries themselves are not represented in a way machines can interpret.

This is the real significance of the C-UAS example. The issue is not simply that a phrase may be interpreted differently by different communities. The issue is that enterprises routinely build programs, architectures, and increasingly AI-enabled workflows on top of terms whose meanings have never been made explicit enough to support coordinated action.

That is not a language problem. It is an execution problem. And in the age of AI, execution problems scale faster.

If the semantic foundation is weak, AI will amplify confusion, inconsistency, and policy risk. If the semantic foundation is durable, AI can operate with greater relevance, better alignment, and clearer constraints. In that environment, automation becomes more than impressive. It becomes trustworthy. It can assist aggressively where appropriate while remaining bounded by authority, validation criteria, and traceability.
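One way to picture "bounded by authority, validation criteria, and traceability" is a small policy check that runs before any automated action. The sketch below is an illustration under stated assumptions, not a description of any particular product: the `POLICY` table, the `ActionRequest` and `Decision` types, the `authorize` function, and every identifier inside them are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ActionRequest:
    action: str                    # what the automated system wants to do
    concept_id: str                # the explicitly identified concept it acts on
    actor_authorities: frozenset   # authorities the requester holds
    evidence: tuple = ()           # references to supporting evidence


@dataclass
class Decision:
    permitted: bool
    reasons: list = field(default_factory=list)  # kept for traceability


# Hypothetical policy table: (concept, action) -> required authority and evidence.
POLICY = {
    ("ex:cuas-mission", "draft_summary"): {
        "authority": "ex:mission-owner",
        "min_evidence": 1,
    },
}


def authorize(request: ActionRequest) -> Decision:
    """Permit an action only if policy, authority, and evidence all line up."""
    rule = POLICY.get((request.concept_id, request.action))
    if rule is None:
        # Default deny: an action no policy covers is outside the boundary.
        return Decision(False, ["no policy covers this action"])

    reasons = []
    if rule["authority"] not in request.actor_authorities:
        reasons.append("missing authority " + rule["authority"])
    if len(request.evidence) < rule["min_evidence"]:
        reasons.append("insufficient evidence")

    if reasons:
        return Decision(False, reasons)
    return Decision(True, ["all checks passed"])
```

In this sketch every decision carries its reasons, so a denial or an approval can be traced back to the rule that produced it. Actions with no matching policy are denied by default, which is one simple way to represent a boundary that a machine can interpret.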

The broader lesson is not limited to C-UAS. Every complex enterprise has its own version of this problem: terms everyone uses, few define, and systems cannot reliably interpret. These are often the most dangerous terms because they create the illusion of alignment while masking divergence underneath.

AI will not fix that. But a durable semantic substrate can.

That is why semantics should not be treated as a technical afterthought to AI. They are part of the precondition for making AI useful, governable, and operationally credible. Before systems can reason well, act well, or interoperate well, they must be grounded in meaning that is explicit, durable, and machine-understandable.

Shared words are not shared meaning. And if meaning is not engineered upstream, ambiguity will be scaled downstream.

Further reading

Our approach to engineering coordination-first systems: https://crownpoint.tech/kcef-explained

(Optional deep dives) Meaning layer • Execution fabric • Orchestration • Goal-oriented UX