Agent Orchestration: From Semantics to Governed Autonomy

In a previous article, we argued that large language models don’t suffer from a lack of data; they suffer from a lack of structure. The industry’s instinct has been to add more text: longer prompts, larger context windows, more retrieval. But token overload is not solved by increasing tokens. It is solved by introducing semantics: shared, durable meaning that reduces ambiguity rather than amplifying it.

In the second article in this series, we took that argument further. Semantics, expressed through ontologies and knowledge graphs, are not just a modeling exercise. They form the interoperability layer required for knowledge-governed execution. When meaning is explicit and policy is encoded, systems can move from probabilistic suggestion to bounded, reliable action.

This brings us to the next logical step.

If semantics provide shared meaning, and knowledge governance provides structure and constraint, then who – or what – acts?

The answer, increasingly, is… agents.

But not in the way the current hype cycle suggests.

The Return of the Agent

“Agent” has become one of the most overloaded terms in AI. It is used to describe everything from a chatbot with tool access to complex multi-agent frameworks coordinating across services.

Yet beneath the noise lies a deeper architectural question: What does it mean for a system to act autonomously?

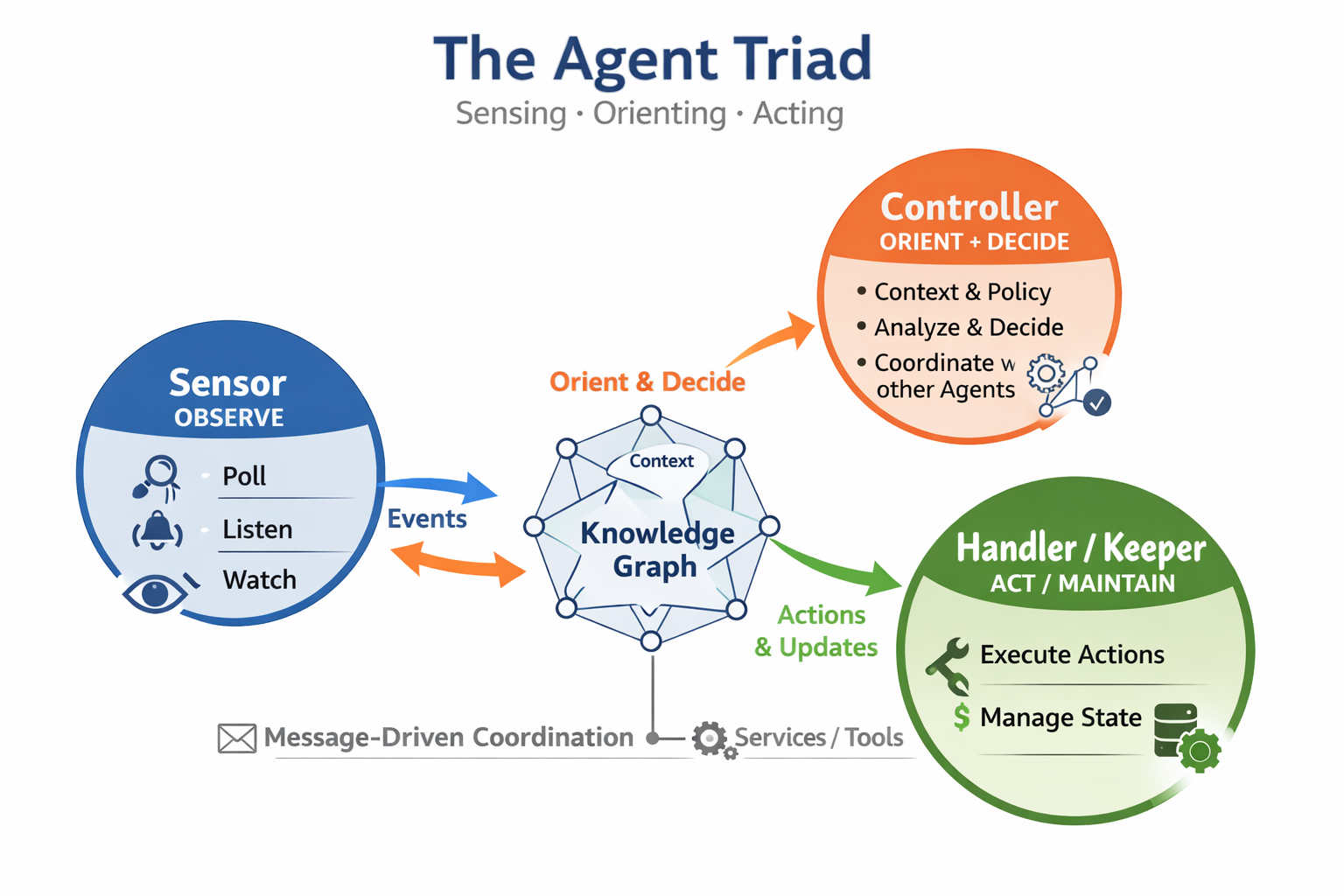

Long before LLMs, agent research grappled with this question. Autonomy was never defined merely as reasoning. It required the integration of knowledge, policy, and execution capability. In a recent restatement of the Agent Triad for modern systems, we revisited this principle: agents are not just reasoning engines; they are actors operating within structured meaning and constraint. That foundation becomes even more critical in today’s distributed, AI-driven environments.

Modern LLM-based agents are extraordinarily capable at generating plans, decomposing goals, and invoking tools. But most operate over loosely structured text, ephemeral memory, and dynamically assembled prompts. They reason statistically about what might be appropriate. They do not operate over a governed representation of what is true, permitted, or aligned to mission.

Without that grounding, autonomy becomes improvisation.

From Reasoning Engines to Governed Actors

The first article demonstrated that text alone is an unstable reasoning substrate. The second showed that semantics stabilize meaning across systems.

Agent orchestration is where those insights meet execution.

When agents operate directly on tokens, their coordination depends on prompt conventions and probabilistic alignment. One agent interprets a goal slightly differently than another. A tool is selected based on surface similarity rather than structural fit. Constraints are implied rather than enforced. The system may function, but it drifts. It is difficult to audit, difficult to verify, and difficult to trust at scale.
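To make the contrast between surface similarity and structural fit concrete, here is a minimal Python sketch. Everything in it is hypothetical and assumed for illustration: the tiny `ONTOLOGY_PARENTS` hierarchy, the `Tool` records, and both selection functions. A word-overlap match picks the tool whose name sounds right, while a check against ontology types picks the tool whose declared input and output actually fit the request.

```python
from dataclasses import dataclass

# Hypothetical ontology fragment: each class maps to its parent class (None = root).
ONTOLOGY_PARENTS = {
    "Asset": None,
    "Aircraft": "Asset",
    "Vehicle": "Asset",
    "MaintenanceOrder": None,
}


def is_a(cls: str, ancestor: str) -> bool:
    """True if cls is ancestor or a descendant of it in the ontology."""
    current = cls
    while current is not None:
        if current == ancestor:
            return True
        current = ONTOLOGY_PARENTS.get(current)
    return False


@dataclass(frozen=True)
class Tool:
    name: str
    input_type: str   # ontology class the tool accepts
    output_type: str  # ontology class the tool produces


# Hypothetical tool registry.
TOOLS = [
    Tool("aircraft_maintenance_lookup", "Vehicle", "MaintenanceOrder"),
    Tool("schedule_asset_service", "Asset", "MaintenanceOrder"),
]


def select_by_name(tools, query: str) -> Tool:
    """Naive baseline: the tool whose name shares the most words with the query."""
    words = set(query.lower().split())
    return max(tools, key=lambda t: len(words & set(t.name.split("_"))))


def select_by_structure(tools, input_type: str, output_type: str) -> list:
    """Tools whose declared signature fits: input accepts input_type, output matches."""
    return [
        t for t in tools
        if is_a(input_type, t.input_type) and t.output_type == output_type
    ]


print(select_by_name(TOOLS, "aircraft maintenance").name)
# aircraft_maintenance_lookup (name matches, but it accepts Vehicle, not Aircraft)
print([t.name for t in select_by_structure(TOOLS, "Aircraft", "MaintenanceOrder")])
# ['schedule_asset_service']
```

The name-based selector stands in for any similarity heuristic; the point is only that the structural check consults modeled types rather than labels.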

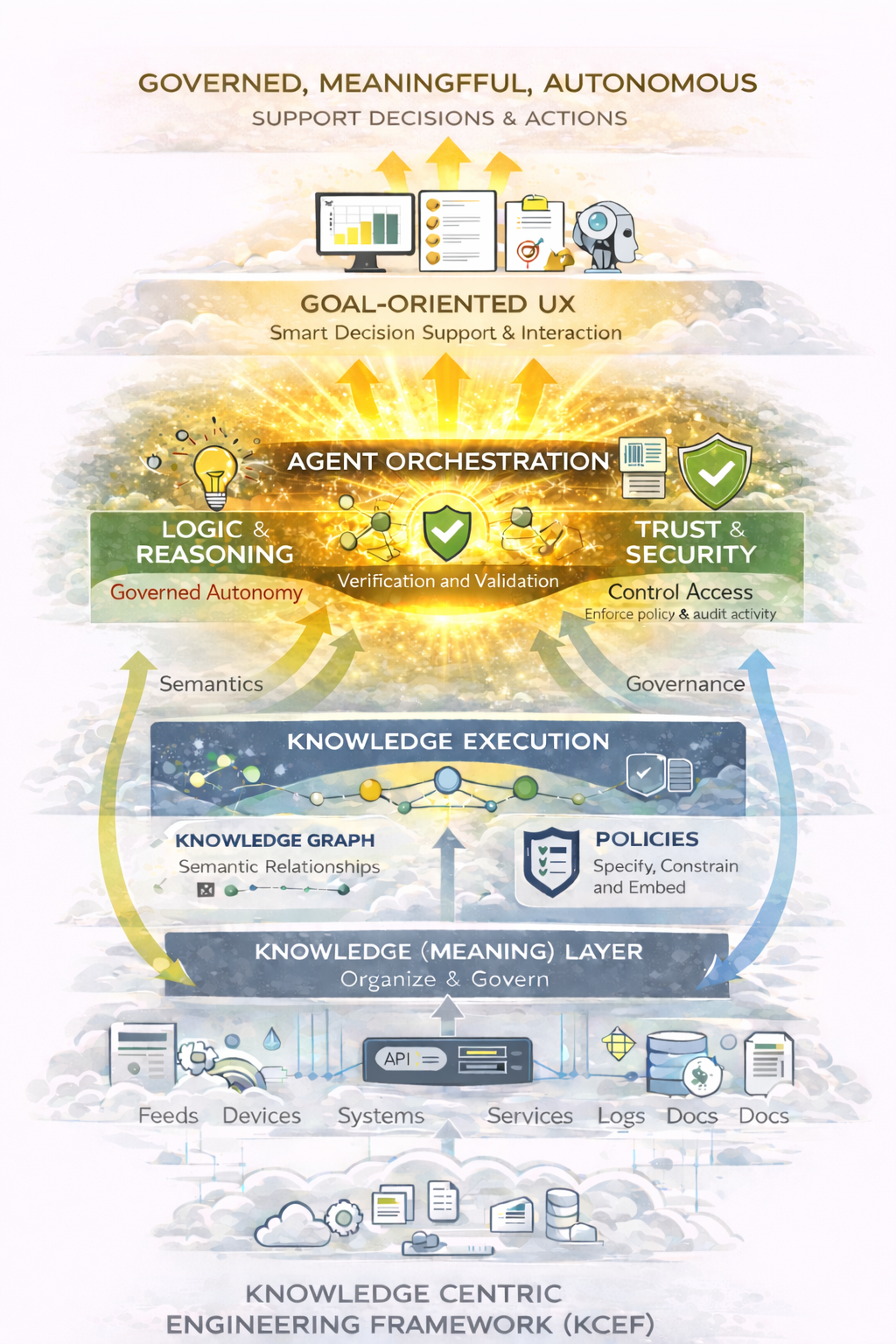

When agents operate within a knowledge-centric architecture, something changes.

Meaning is not inferred on the fly; it is defined

Relationships are not guessed; they are modeled

Policies are not remembered; they are encoded

Agents no longer invent their operating context. They inherit it.

This is the difference between an agent that “figures things out” and an agent that participates in a governed execution fabric.
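A minimal sketch of what “relationships are modeled, not guessed” can look like in code, assuming a toy schema in which each relationship declares the class of its subject and object. The `RELATIONS` schema, the `KnowledgeGraph` class, and the entity identifiers are hypothetical; a real deployment would rely on a proper ontology and graph store, which this sketch does not attempt to reproduce. Facts are validated on write, so every agent that reads the graph inherits the same, already-checked context instead of reconstructing it from a prompt.

```python
from dataclasses import dataclass, field

# Hypothetical schema: relationship -> (subject class, object class).
RELATIONS = {
    "assigned_to": ("Task", "Team"),
    "located_at": ("Asset", "Site"),
}


@dataclass
class KnowledgeGraph:
    types: dict = field(default_factory=dict)   # entity id -> ontology class
    triples: set = field(default_factory=set)   # (subject, relation, object)

    def add_entity(self, entity: str, cls: str) -> None:
        self.types[entity] = cls

    def add_fact(self, subj: str, rel: str, obj: str) -> None:
        """Store a fact only if it conforms to the declared relationship schema."""
        if rel not in RELATIONS:
            raise ValueError(f"unknown relation: {rel}")
        domain, range_ = RELATIONS[rel]
        if self.types.get(subj) != domain or self.types.get(obj) != range_:
            raise ValueError(f"{subj} {rel} {obj} violates {domain} -> {range_}")
        self.triples.add((subj, rel, obj))

    def context_for(self, entity: str) -> list:
        """The context any agent inherits: every validated fact touching the entity."""
        return sorted(t for t in self.triples if entity in (t[0], t[2]))


kg = KnowledgeGraph()
kg.add_entity("task-17", "Task")
kg.add_entity("team-blue", "Team")
kg.add_entity("site-3", "Site")
kg.add_fact("task-17", "assigned_to", "team-blue")

try:
    kg.add_fact("task-17", "located_at", "site-3")  # a Task is not an Asset
except ValueError as err:
    print("rejected:", err)

print(kg.context_for("task-17"))
# [('task-17', 'assigned_to', 'team-blue')]
```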

Orchestration Is the Real Innovation

It is tempting to think the breakthrough is better reasoning models. In reality, the more consequential shift is architectural.

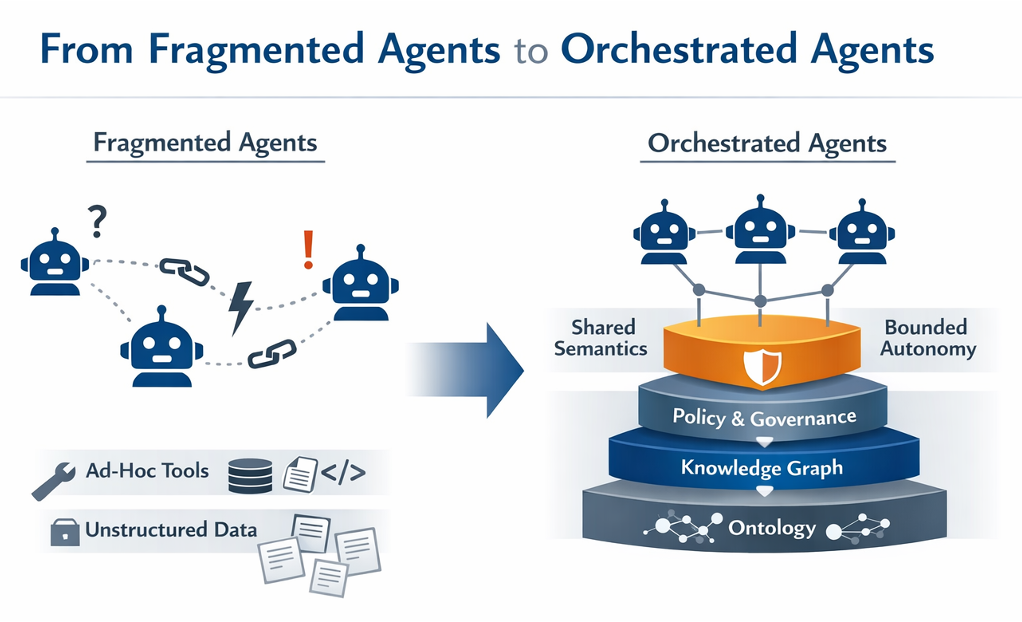

Orchestration is not simply task routing or API chaining. True orchestration occurs when multiple agents operate within a shared semantic and policy framework, decomposing goals in a way that is consistent with mission intent and organizational constraints.

From Fragmented Agents to Orchestrated Agents: Agents operating over unstructured data and ad hoc integrations produce inconsistency and drift. When grounded in shared semantics, knowledge graphs, and policy governance, agents achieve bounded autonomy within a coherent execution fabric.

The illustration contrasts two fundamentally different architectural patterns. On the left, fragmented agents operate independently, connected only through brittle links and ad hoc tooling layered over unstructured data. Coordination is implied rather than governed, resulting in ambiguity, inconsistency, and unpredictable behavior. On the right, agents operate within a structured stack built on ontology, knowledge graphs, and policy governance. Shared semantics align interpretation, policy bounds constrain action, and orchestration provides coordination. The visual emphasizes that the difference between unreliable automation and trustworthy autonomy is not model intelligence, but architectural foundation.

In this model, the architecture provides:

A common ontology that defines entities, roles, and relationships

A knowledge graph that represents state and context

Policy mechanisms that constrain permissible actions

Supervisory layers that enable human governance

Agents operate inside this fabric. They reason about goals, select actions, and coordinate with one another, but always within boundaries that are explicit and inspectable.

This produces something we have not previously had at scale: bounded autonomy.
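The orchestration idea above can be sketched as a router that only dispatches work through roles the shared ontology defines. This is a minimal sketch under stated assumptions: `ONTOLOGY_ROLES`, `Subtask`, and `Orchestrator` are hypothetical names, and the decomposed plan is taken as given (in practice it might come from an LLM planner). Subtasks whose role no registered agent holds are returned for review instead of being handed to whichever agent seems closest.

```python
from dataclasses import dataclass

# Hypothetical roles declared in the shared ontology.
ONTOLOGY_ROLES = {"Planner", "Analyst", "Executor"}


@dataclass(frozen=True)
class Subtask:
    description: str
    role: str  # ontology role responsible for this subtask


class Orchestrator:
    def __init__(self) -> None:
        self.agents: dict[str, str] = {}  # role -> agent name

    def register(self, role: str, agent: str) -> None:
        """Agents can only be bound to roles the ontology defines."""
        if role not in ONTOLOGY_ROLES:
            raise ValueError(f"role {role!r} is not defined in the ontology")
        self.agents[role] = agent

    def dispatch(self, subtasks):
        """Route each subtask by role; return (assigned, unassigned)."""
        assigned, unassigned = [], []
        for st in subtasks:
            agent = self.agents.get(st.role)
            if agent is None:
                unassigned.append(st)  # surfaced for review, not improvised
            else:
                assigned.append((agent, st.description))
        return assigned, unassigned


orch = Orchestrator()
orch.register("Analyst", "analysis-agent")
orch.register("Executor", "execution-agent")

# Decomposed plan, assumed to come from an upstream planner.
plan = [
    Subtask("assess current inventory", "Analyst"),
    Subtask("place restock order", "Executor"),
    Subtask("approve budget", "Approver"),  # role not in the ontology
]
assigned, unassigned = orch.dispatch(plan)
print(assigned)
# [('analysis-agent', 'assess current inventory'), ('execution-agent', 'place restock order')]
print([st.description for st in unassigned])
# ['approve budget']
```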

Bounded Autonomy and Trust

Full autonomy is often portrayed as the objective. In enterprise and mission environments, that is neither realistic nor desirable.

What is required is bounded autonomy, i.e., the ability for agents to adapt, decompose objectives, and act within clearly defined semantic and policy constraints.

Bounded autonomy enables:

Predictable behavior within known domains

Escalation when constraints are exceeded

Auditable state transitions

Alignment to organizational objectives rather than local optimizations

Trust does not emerge from larger models or longer prompts. It emerges from structural alignment between knowledge, policy, and action.

This has been a consistent principle in agent systems for decades. What is different today is that we finally have the computational infrastructure – LLM reasoning, scalable knowledge graphs, cloud-native orchestration – to implement it in operational environments.
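Bounded autonomy can be sketched as a policy check that returns one of three outcomes and records every state change. In this minimal sketch the `Policy` fields (`allowed_actions`, `auto_limit`, `hard_limit`), the state names, and the spending-limit framing are all assumptions chosen for illustration, not a prescribed design: actions inside the known domain and under the automatic limit proceed, actions above it escalate, and anything outside policy is denied.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    DENY = "deny"


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset  # actions inside the known domain (hypothetical)
    auto_limit: float           # amount the agent may commit on its own
    hard_limit: float           # amount no agent may commit through this path


@dataclass
class BoundedAgent:
    policy: Policy
    state: str = "idle"
    audit_log: list = field(default_factory=list)

    def _transition(self, new_state: str, reason: str) -> None:
        # Append-only record of every state change, so behaviour can be audited later.
        self.audit_log.append((self.state, new_state, reason))
        self.state = new_state

    def propose(self, action: str, amount: float) -> Decision:
        if action not in self.policy.allowed_actions or amount > self.policy.hard_limit:
            self._transition("blocked", f"{action} {amount} outside policy")
            return Decision.DENY
        if amount > self.policy.auto_limit:
            self._transition("awaiting_review", f"{action} {amount} exceeds auto limit")
            return Decision.ESCALATE
        self._transition("executing", f"{action} {amount} within bounds")
        return Decision.ALLOW


agent = BoundedAgent(Policy(frozenset({"reorder_stock"}), auto_limit=1_000, hard_limit=10_000))
results = [
    agent.propose("reorder_stock", 500),    # within bounds
    agent.propose("reorder_stock", 5_000),  # above the automatic limit
    agent.propose("cancel_contract", 100),  # outside the known domain
]
print([d.value for d in results])  # ['allow', 'escalate', 'deny']
for entry in agent.audit_log:
    print(entry)
```

Keeping the decision and the audit record in the same code path is the design choice that matters here: no action can change state without leaving an inspectable trace.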

The Human Role Evolves

In app-centric systems, humans manually orchestrate workflows. We integrate tools, move data between systems, and resolve ambiguities through experience.

In ungoverned agent systems, humans become reactive supervisors, stepping in when stochastic behavior produces unexpected outcomes.

In knowledge-governed, agent-orchestrated systems, humans move up a layer.

They define intent

They encode policy

They monitor alignment

They intervene at meaningful abstraction boundaries

The system handles structured decomposition and execution. The human governs.

This is not the removal of humans from the loop. It is the elevation of humans within it.
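Moving humans up a layer can be sketched as a supervisor that executes routine steps and holds boundary-crossing steps for a human ruling. The `Intent`, `Step`, and `Supervisor` types and the `"irreversible"` category are hypothetical; the sketch only shows the shape of the interaction, with the human authoring intent and constraints and deciding at the boundary while routine execution proceeds on its own.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Intent:
    """Authored by a human: the desired outcome and where review is required."""
    outcome: str
    review_categories: frozenset  # step categories that cross a governance boundary


@dataclass(frozen=True)
class Step:
    name: str
    category: str


@dataclass
class Supervisor:
    intent: Intent
    review_queue: list = field(default_factory=list)
    executed: list = field(default_factory=list)
    decisions: list = field(default_factory=list)  # (step name, approved) rulings

    def submit(self, step: Step) -> None:
        """Execute routine steps; hold boundary-crossing steps for a human."""
        if step.category in self.intent.review_categories:
            self.review_queue.append(step)
        else:
            self.executed.append(step.name)

    def human_decision(self, step_name: str, approved: bool) -> None:
        """Record a human ruling on a held step; approved steps then execute."""
        # Raises StopIteration if no held step has this name (kept simple for the sketch).
        step = next(s for s in self.review_queue if s.name == step_name)
        self.review_queue.remove(step)
        self.decisions.append((step.name, approved))
        if approved:
            self.executed.append(step.name)


sup = Supervisor(Intent("restore service at site-3", frozenset({"irreversible"})))
for step in [
    Step("diagnose fault", "routine"),
    Step("reroute traffic", "routine"),
    Step("decommission node", "irreversible"),
]:
    sup.submit(step)

print(sup.executed)                        # ['diagnose fault', 'reroute traffic']
print([s.name for s in sup.review_queue])  # ['decommission node']
sup.human_decision("decommission node", approved=True)
print(sup.executed)  # ['diagnose fault', 'reroute traffic', 'decommission node']
```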

The Architectural Progression

The progression across these three articles is deliberate.

Token overload exposed the limits of unstructured reasoning

Semantics introduced a durable meaning layer

Knowledge governance transformed meaning into bounded execution

Agent orchestration operationalizes autonomy within that governed environment

The future is not agent-first.

It is architecture-first, with agents as participants in a knowledge-centric execution fabric.

When agents operate without shared semantics, they improvise

When they operate without policy, they drift

When they operate without orchestration, they fragment

But when they operate within a governed semantic architecture, they become something far more powerful: adaptive actors aligned to mission and business outcomes.

The technology is finally catching up to ideas that have been maturing for decades. The question now is not whether agents can act; it’s whether we will design the architecture that ensures they act responsibly.

Architecture First, Agents Second

But there is one more shift required, and it is the one users will feel most directly.

If agents can decompose goals and act across a knowledge-governed fabric, then the user experience itself must evolve. We should no longer require users to navigate applications transaction by transaction, stitching together workflows across disconnected systems. Instead, users should operate at the level of intent: expressing outcomes rather than clicking through tools.

In the next article, we will explore the transition from transactional, app-centric interaction to goal-centric experience, and what it takes architecturally to support systems that execute across capabilities rather than within silos.

The future is not just agent-orchestrated.

It is goal-driven.

Key takeaways

Semantics precede autonomy. Agents cannot be trusted if they operate over loosely structured text; they must act on shared, explicit meaning.

Autonomy requires architecture. True agents integrate knowledge, policy constraints, and execution capability, not just probabilistic reasoning.

Orchestration is the breakthrough. Coordinating agents within a shared semantic and governance framework is more important than building larger models.

Bounded autonomy enables trust. Policy-aware constraints, explainable state transitions, and human oversight make agent systems reliable in enterprise and mission environments.

Humans move up a layer. In knowledge-governed systems, people define intent and govern outcomes while architecture orchestrates execution.