LLMs Don’t Need More Text … They Need More Semantics

(Note: We originally published this article on LinkedIn on January 21, 2026.)

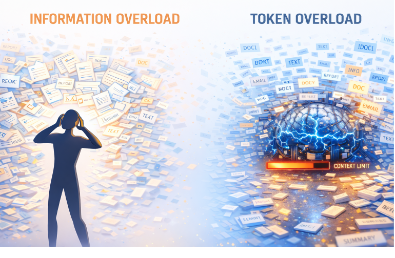

We’ve shifted from an era of human information overload — drowning in documents — to an era of token overload, where large language models are limited by fixed context windows.

It’s been exciting to see the growing adoption of what we now commonly call knowledge graph technology, particularly implementations based on W3C OWL and RDF. Anyone who has worked with these standards knows that semantic technologies have historically been slow to gain adoption. The usual reasons come to mind: perceived complexity and concerns about scalability.

Over time, and through real-world use, we learned that a little semantics goes a long way. Paired with modern in-memory, massively parallel processing (MPP) graph OLAP architectures, knowledge graphs can now be queried at scales that were once impractical.

Another important lesson is that while top-down ontology engineering is valuable, it isn’t always required to deliver enterprise-scale solutions, especially at the outset. In practice, we can automatically generate data-source (or “putative”) ontologies and link them to domain, application, or domain-independent ontologies. This approach has proven highly effective: it accelerates time-to-value while still allowing richer ontologies to evolve over time.
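To make the idea of a putative ontology concrete, here is a minimal sketch in Python, assuming a single relational table as the data source. It emits one `owl:Class` per table and one `owl:DatatypeProperty` per column, then adds hand-written `rdfs:subClassOf` and `rdfs:subPropertyOf` links into a domain ontology. The namespaces, the table and column names (`customers`, `cust_name`, and so on), and the helper functions are hypothetical; real generators handle keys, foreign-key relationships, and naming far more carefully.

```python
# Hypothetical sketch: derive a putative (data-source) ontology from one table
# schema, then align it to a domain ontology. All namespaces and names are
# illustrative assumptions, not part of any real system.

SRC = "http://example.org/src/crm#"   # hypothetical source namespace
DOM = "http://example.org/domain#"    # hypothetical domain namespace
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS = "http://www.w3.org/2000/01/rdf-schema#"
OWL = "http://www.w3.org/2002/07/owl#"
XSD = "http://www.w3.org/2001/XMLSchema#"

# Deliberately incomplete SQL-to-XSD datatype mapping.
SQL_TO_XSD = {"INTEGER": "integer", "VARCHAR": "string",
              "DATE": "date", "DECIMAL": "decimal"}


def putative_ontology(table, columns):
    """One owl:Class per table and one owl:DatatypeProperty per column."""
    cls = SRC + table
    triples = [(cls, RDF_TYPE, OWL + "Class")]
    for name, sql_type in columns:
        prop = f"{SRC}{table}_{name}"
        xsd = XSD + SQL_TO_XSD.get(sql_type, "string")  # fall back to xsd:string
        triples += [(prop, RDF_TYPE, OWL + "DatatypeProperty"),
                    (prop, RDFS + "domain", cls),
                    (prop, RDFS + "range", xsd)]
    return triples


def link_to_domain(class_map, property_map):
    """Alignment axioms: source terms become subclasses/subproperties of domain terms."""
    links = [(SRC + s, RDFS + "subClassOf", DOM + d) for s, d in class_map.items()]
    links += [(SRC + s, RDFS + "subPropertyOf", DOM + d) for s, d in property_map.items()]
    return links


schema = [("cust_id", "INTEGER"), ("cust_name", "VARCHAR"), ("signup", "DATE")]
onto = putative_ontology("customers", schema)
onto += link_to_domain({"customers": "Customer"}, {"customers_cust_name": "name"})

for s, p, o in onto:  # N-Triples output; every term here is an IRI
    print(f"<{s}> <{p}> <{o}> .")
```

Linking through subclass and subproperty axioms, rather than rewriting the source terms, leaves the generated ontology untouched, so richer domain mappings can be layered on later without regenerating it.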

Equally important is OWL’s open-world assumption: the absence of a statement means it is unknown, not false. Change isn’t an exception; it’s expected. This stands in sharp contrast to traditional data models built on a closed-world assumption, and it removes much of the fear associated with schema rigidity and long-term change management.
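A toy contrast between the two assumptions, offered as a sketch rather than a description of what an OWL reasoner actually does: under a closed-world reading a missing fact is false, while under an open-world reading it is merely unknown. The company names and the `known_false` set (standing in for explicitly asserted negative facts) are hypothetical.

```python
# Toy contrast between closed-world and open-world query semantics.
# Facts and names are hypothetical; a real OWL reasoner does far more.
from enum import Enum


class Truth(Enum):
    TRUE = "true"
    FALSE = "false"
    UNKNOWN = "unknown"


facts = {("acme", "hasSupplier", "globex")}
# Explicitly asserted negative knowledge (e.g. an owl:NegativePropertyAssertion);
# empty in this toy example.
known_false = set()


def closed_world(triple):
    # CWA, typical of relational databases: anything not stored is false.
    return Truth.TRUE if triple in facts else Truth.FALSE


def open_world(triple):
    # OWA, as in RDF/OWL: absence of a fact means "not known", not "false".
    if triple in facts:
        return Truth.TRUE
    if triple in known_false:
        return Truth.FALSE
    return Truth.UNKNOWN


q = ("acme", "hasSupplier", "initech")
print(closed_world(q).value, open_world(q).value)  # false unknown

# New data arriving later does not contradict an earlier open-world answer:
# "unknown" simply becomes "true".
facts.add(q)
print(open_world(q).value)  # true
```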

When combined with graph OLAP query engines, knowledge graphs move from a niche capability to core infrastructure. Capacity becomes elastic rather than a hard constraint, and both human and automated clients can ask unanticipated questions on demand. The graph increasingly acts as an interactive reasoning substrate rather than a static data store.

So where does generative AI fit into all of this?

I tend to think of today’s GenAI systems less as “intelligence” and more as very sophisticated text summarizers. We’ve effectively shifted from an earlier era of information overload, where humans were overwhelmed by documents, to a new era of token overload, where models are constrained by bounded context windows.

As prompts grow longer, relevant information is often truncated or diluted by surrounding noise. Retrieval-augmented pipelines may return loosely related passages that are redundant, ambiguous, or even contradictory. The model is then forced to reconcile all of this implicitly within a limited reasoning workspace — sometimes successfully, sometimes not.
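The truncation problem can be sketched with a naive prompt builder, assuming a fixed token budget and using whitespace-separated words as a crude stand-in for model tokens. The passages, the `pack_context` helper, and the budget of 36 are made up for illustration; the point is that a near-duplicate and an ambiguous passage consume the budget while the passage that actually answers the question is dropped.

```python
# Minimal sketch of a naive retrieval-augmented prompt builder. Assumptions:
# a fixed budget, words as a rough proxy for tokens, and made-up passages
# about a fictional company, already ranked by a retriever.

def pack_context(passages, budget):
    """Greedily add ranked passages until the budget is exhausted."""
    used, kept, dropped = 0, [], []
    for text in passages:
        cost = len(text.split())  # crude token estimate
        if used + cost <= budget:
            kept.append(text)
            used += cost
        else:
            dropped.append(text)  # silently left out of the prompt
    return kept, dropped, used


ranked = [
    "Acme Corp reported revenue growth in 2024 driven by cloud services.",
    "ACME Corporation saw revenue rise in 2024, mainly from its cloud unit.",  # near-duplicate
    "Acme Inc., an unrelated toy maker, reported flat revenue in 2024.",       # ambiguous entity
    "Acme Corp's 2024 revenue was 4.2 billion USD.",                           # the actual answer
]

kept, dropped, used = pack_context(ranked, budget=36)
print(len(kept), "passages kept,", used, "words used")  # 3 passages kept, 34 words used
print("dropped:", dropped)
```

Real retrievers rank and deduplicate more carefully, but the budget is still fixed, so every redundant or ambiguous passage displaces something else.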

The underlying issue hasn’t changed: too much unstructured text competing for a bounded reasoning space.

This is where knowledge graphs matter.

By representing meaning directly (facts as triples rather than paragraphs), knowledge graphs dramatically reduce the amount of text required to convey essential information. Deterministic retrieval via SPARQL ensures that only data matching the query’s graph patterns and filters is returned. Canonical identifiers, typed relationships, and OWL/SHACL constraints resolve ambiguity and enforce guardrails before the model ever sees the data.
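As a sketch of the mechanics, the Python below stands in for a SPARQL engine with a tiny basic-graph-pattern matcher over an in-memory list of triples, adds an alias table that resolves surface names to one canonical identifier, and runs a SHACL-inspired cardinality check. It is not a SPARQL or SHACL implementation, and the `ex:` identifiers, the data, and the helper names (`canonical`, `match`, `check_exactly_one`) are hypothetical.

```python
# Toy stand-in for SPARQL basic-graph-pattern matching, canonical-identifier
# resolution, and a SHACL-like cardinality check. All data is hypothetical.

TRIPLES = [
    ("ex:acme", "rdf:type", "ex:Company"),
    ("ex:acme", "ex:hq", "ex:berlin"),
    ("ex:globex", "rdf:type", "ex:Company"),
    ("ex:globex", "ex:hq", "ex:paris"),
    ("ex:berlin", "ex:country", "ex:germany"),
]

# Canonical identifiers: several surface forms map to one IRI.
ALIASES = {"acme": "ex:acme", "acme corp": "ex:acme", "acme corporation": "ex:acme"}


def canonical(name):
    """Return the canonical IRI for a surface name, or None if unknown."""
    return ALIASES.get(name.strip().lower())


def match(patterns, binding=None):
    """Yield bindings for '?'-prefixed variables that satisfy every pattern."""
    binding = binding or {}
    if not patterns:
        yield binding
        return
    first, rest = patterns[0], patterns[1:]
    for triple in TRIPLES:
        b = dict(binding)
        ok = True
        for term, value in zip(first, triple):
            term = b.get(term, term)  # substitute already-bound variables
            if term.startswith("?"):
                b[term] = value
            elif term != value:
                ok = False
                break
        if ok:
            yield from match(rest, b)


def check_exactly_one(cls, prop):
    """SHACL-like minCount 1 / maxCount 1 check; returns violating nodes."""
    focus = [s for s, p, o in TRIPLES if p == "rdf:type" and o == cls]
    return [n for n in focus
            if sum(1 for s, p, _ in TRIPLES if s == n and p == prop) != 1]


# "In which country is Acme Corp headquartered?"
company = canonical("Acme Corp")
if company is None:
    raise ValueError("unresolved entity name; refusing to guess")
query = [(company, "ex:hq", "?city"), ("?city", "ex:country", "?country")]
print(list(match(query)))  # [{'?city': 'ex:berlin', '?country': 'ex:germany'}]
print(check_exactly_one("ex:Company", "ex:hq"))  # []
```

Because matching is exact, an unresolved name such as "Acme Inc." yields `None` instead of silently pulling in a different Acme, and the constraint check runs before anything reaches the model.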

Just as importantly, logic is handled outside the LLM. Joins, filters, aggregations, and even inference are executed in the graph, allowing the model to focus on synthesis and explanation rather than simulating reasoning token by token.
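Here is a small sketch of moving logic out of the model, assuming a toy in-memory graph: a forward-chaining pass applies two RDFS entailment rules (rdfs9, type propagation along `rdfs:subClassOf`, and rdfs11, transitivity of `rdfs:subClassOf`) until nothing new is derived, and a count is then computed over the inferred types. The vehicle data and the `rdfs_closure` helper are hypothetical; production graph engines implement these rules, and many more, natively.

```python
# Sketch of "logic outside the LLM": RDFS inference plus an aggregation.
# Data is hypothetical; only rules rdfs9 and rdfs11 are implemented.
from collections import Counter

triples = {
    ("ex:Sedan", "rdfs:subClassOf", "ex:Car"),
    ("ex:Car", "rdfs:subClassOf", "ex:Vehicle"),
    ("ex:Truck", "rdfs:subClassOf", "ex:Vehicle"),
    ("ex:v1", "rdf:type", "ex:Sedan"),
    ("ex:v2", "rdf:type", "ex:Truck"),
    ("ex:v3", "rdf:type", "ex:Car"),
}


def rdfs_closure(graph):
    """Apply rdfs9 and rdfs11 repeatedly until a fixpoint is reached."""
    g = set(graph)
    while True:
        sub = {(s, o) for s, p, o in g if p == "rdfs:subClassOf"}
        # rdfs11: A subClassOf B, B subClassOf D  =>  A subClassOf D
        new = {(a, "rdfs:subClassOf", d) for a, b in sub for c, d in sub if b == c}
        # rdfs9: x type C, C subClassOf D  =>  x type D
        new |= {(x, "rdf:type", d) for x, p, c in g if p == "rdf:type"
                for c2, d in sub if c == c2}
        if new <= g:
            return g
        g |= new


inferred = rdfs_closure(triples)
counts = Counter(c for _, p, c in inferred if p == "rdf:type")

# Only this one line would be handed to the LLM as context.
context = f"Number of vehicles: {counts['ex:Vehicle']}"
print(context)  # Number of vehicles: 3
```

The model receives a single line with the answer instead of six raw facts plus an implicit request to reason over a class hierarchy.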

In short: knowledge graphs act as semantic compression for LLMs, reducing token load, eliminating ambiguity, and externalizing logic.
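A rough illustration of that compression, assuming whitespace-separated words as a proxy for tokens: the same two facts are expressed once as prose and once as serialized triples. The paragraph, the `ex:` identifiers, and the `to_context` helper are hypothetical, and real token counts depend on the model’s tokenizer (prefixed names like `ex:berlin` often split into several subword tokens), so treat the ratio as indicative only.

```python
# Rough illustration of "semantic compression"; words are a crude token proxy
# and all text and identifiers are hypothetical.

paragraph = (
    "Acme Corp, which is sometimes referred to as ACME Corporation in older "
    "filings, is a company whose headquarters are located in the city of "
    "Berlin, which in turn is situated in Germany."
)

triples = [
    ("ex:acme", "ex:hq", "ex:berlin"),
    ("ex:berlin", "ex:country", "ex:germany"),
]


def to_context(facts):
    """Serialize triples as compact 'subject predicate object' lines."""
    return "\n".join(" ".join(t) for t in facts)


compact = to_context(triples)
print(len(paragraph.split()), "words as prose")    # 32 words as prose
print(len(compact.split()), "words as triples")    # 6 words as triples
print(compact)
```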

In a future post, I’ll connect this to the next step: how knowledge graphs don’t just ground generative AI but actively empower intelligent agents and agentic systems by providing memory, structure, constraints, and actionable context.

More to come.