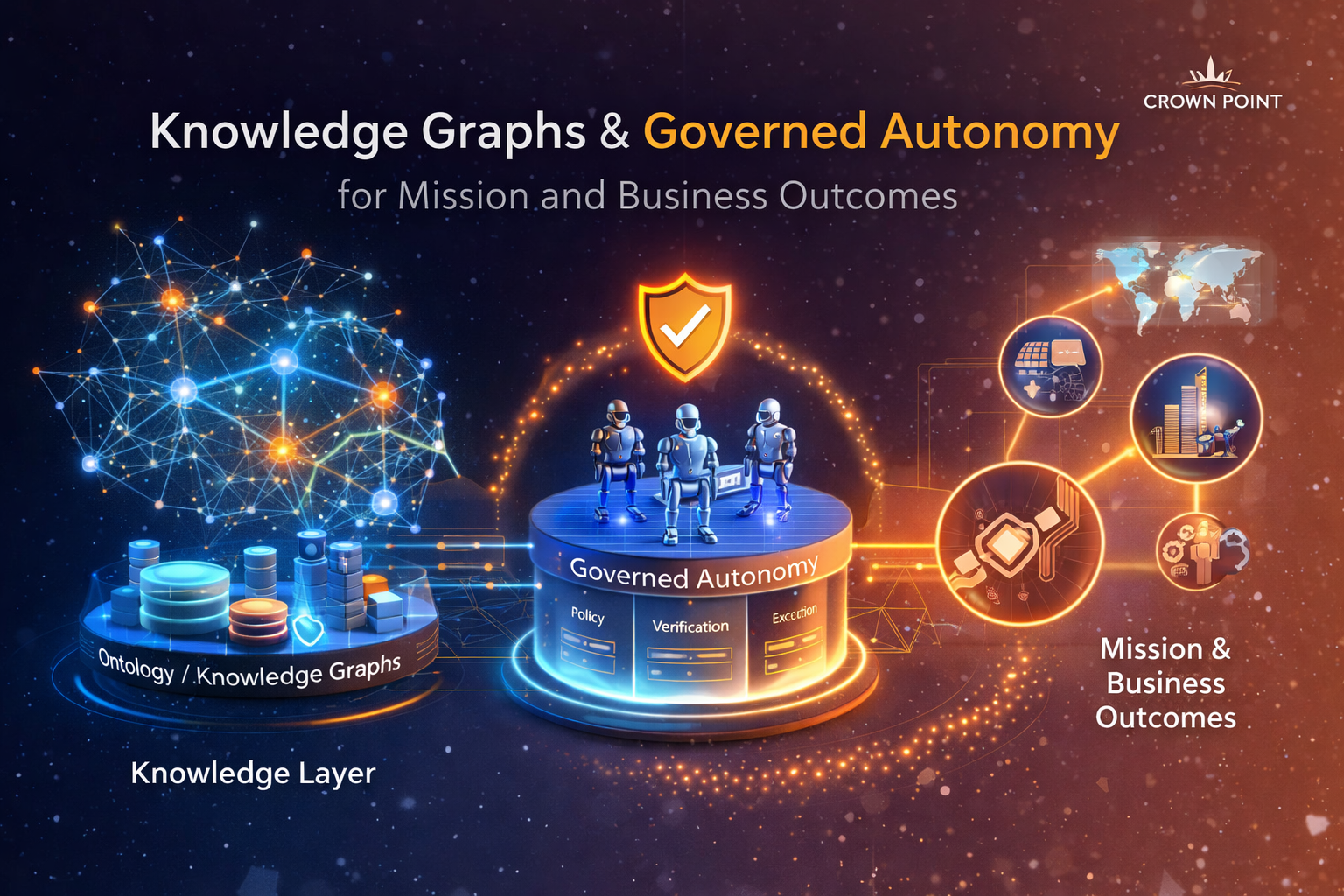

KCEF: Governed, Meaningful Autonomy for Mission and Business Outcomes

KCEF turns knowledge into governed autonomy: linking meaning, policy, and verification to outcome-driven execution.

The Knowledge-Centric Engineering Framework (KCEF) is a knowledge-governed approach to engineering and operations that helps organizations move from information to reliable, verifiable action. KCEF establishes a shared Knowledge (Meaning) Layer, built on ontologies and knowledge graphs, to normalize and harmonize heterogeneous data, services, and policies into actionable context. On top of that foundation, KCEF enables governed autonomy: agent-assisted orchestration and execution that can adapt as conditions change, while enforcing policy, preserving human control, and producing auditable traceability from intent to outcome.

Why KCEF Matters in the LLM Era: Reliability by Architecture, Not Optimism

Large language models (LLMs) are powerful accelerators for synthesis, interaction, and automation, but they also exhibit persistent limitations that scaling alone does not remove. These include hallucination risk, fragile long-context behavior, inconsistent reasoning under novelty, and dependence on retrieval pipelines that can drift, degrade, or be manipulated. In other words, LLMs are best treated as capabilities within a larger system, not as authoritative sources of truth.

KCEF aligns directly with this reality. It is a reliability architecture for AI-enabled operations: a way to convert information into governed, outcome-driven behavior while preserving human control and defensibility. Rather than trusting a model to “get it right,” KCEF designs the system to be right enough to act and safe enough to stop when it is not.

Evidence is emerging that “LLM + environment” is not enough: dependable action also requires durable memory and reflective correction. In Wen et al.’s DiLu framework for closed-loop decision-making in autonomous driving, an out-of-the-box LLM performs poorly without accumulated experience; adding a structured memory of prior scenarios and reasoning, plus a reflection mechanism that detects unsafe decisions and revises them, significantly improves performance and generalization. The pattern is clear: durable, reusable context and explicit corrective feedback loops are what turn an LLM from a conversational engine into an operational decision component. KCEF generalizes this pattern beyond autonomy research into enterprise and mission operations: shared meaning + governed memory + verification + policy-enforced execution.

KCEF’s architectural response to LLM limits

1) Ground truth is externalized into a governed meaning layer

KCEF uses ontologies and knowledge graphs to normalize and harmonize heterogeneous sources into a durable semantic structure that encodes meaning, relationships, constraints, and provenance. This reduces reliance on prompt stuffing (packing ever more raw context into a prompt) and makes the system’s “memory” explicit, reusable, and auditable. When AI is invoked, it is grounded against a curated semantic layer rather than improvising from incomplete context.
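A minimal Python sketch of this idea follows, assuming a toy in-memory store of subject-predicate-object facts rather than a real ontology language or graph database. The field mapping (`FIELD_TO_TERM`), the status vocabulary, and the `MeaningLayer` class are hypothetical illustrations, not part of any KCEF specification. Two sources describe the same pump with different field names; the layer maps both onto shared terms, rejects a value that violates a constraint, and keeps the source of every accepted fact.

```python
from dataclasses import dataclass

# Hypothetical ontology mapping: source-specific field names -> shared ontology terms.
FIELD_TO_TERM = {
    "asset_id": "Asset", "equip_no": "Asset",
    "loc": "locatedAt", "site": "locatedAt",
    "status_cd": "hasStatus", "state": "hasStatus",
}
# Hypothetical constraint: the only status values the ontology accepts.
ALLOWED_STATUS = {"operational", "degraded", "offline"}


@dataclass(frozen=True)
class Fact:
    subject: str
    predicate: str
    obj: str
    source: str  # provenance: which system asserted this fact


class MeaningLayer:
    def __init__(self) -> None:
        self.facts: list[Fact] = []
        self.rejected: list[tuple[dict, str]] = []  # kept for audit, not silently dropped

    def ingest(self, record: dict, source: str) -> None:
        """Map one source record onto shared terms, check constraints, keep provenance."""
        subject = None
        pairs = []
        for key, value in record.items():
            term = FIELD_TO_TERM.get(key)
            if term == "Asset":
                subject = str(value)
            elif term is not None:
                pairs.append((term, str(value).strip()))
        if subject is None:
            self.rejected.append((record, "no asset identifier"))
            return
        for predicate, obj in pairs:
            if predicate == "hasStatus":
                obj = obj.lower()
                if obj not in ALLOWED_STATUS:
                    self.rejected.append((record, f"invalid status {obj!r}"))
                    continue
            self.facts.append(Fact(subject, predicate, obj, source))

    def query(self, subject: str, predicate: str) -> list[Fact]:
        """Grounding lookup: return curated facts instead of letting a model guess."""
        return [f for f in self.facts if f.subject == subject and f.predicate == predicate]


# Two systems describe the same pump with different field names and vocabularies.
layer = MeaningLayer()
layer.ingest({"asset_id": "PUMP-7", "status_cd": "Degraded", "loc": "Site-A"}, source="maintenance_db")
layer.ingest({"equip_no": "PUMP-7", "state": "broken?"}, source="field_report")

for fact in layer.query("PUMP-7", "hasStatus"):
    print(fact.obj, "from", fact.source)  # prints: degraded from maintenance_db
```

A production meaning layer could instead rely on standards such as RDF for the graph and SHACL for constraints; the sketch only shows the shape of the flow: normalize, validate, record provenance, then ground queries against the result.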

2) Autonomy is governed, not assumed

KCEF enables increasing autonomy, but always within policy. The system is designed so that low-risk actions can proceed automatically when conditions are satisfied, while higher-impact actions are routed to designated authorities for approval. This turns autonomy into a controllable operating mode: guardrailed, measurable, and aligned to mission and business outcomes.
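The routing rule can be sketched in a few lines of Python. The 1 to 5 impact scale, the `AUTO_MAX_IMPACT` threshold, and the authority names are hypothetical; a real deployment would derive them from its own policy. Unmet preconditions stop the action outright, low-impact actions run unattended, and everything else is escalated.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Route(Enum):
    AUTO = "execute automatically"
    ESCALATE = "route to designated authority"
    BLOCK = "block: preconditions not met"


@dataclass
class ProposedAction:
    name: str
    impact: int  # hypothetical scale: 1 (low) to 5 (high)
    preconditions: dict[str, bool] = field(default_factory=dict)


# Hypothetical policy: the autonomy ceiling and who approves anything above it.
AUTO_MAX_IMPACT = 2
AUTHORITIES = {3: "shift supervisor", 4: "operations manager", 5: "mission commander"}


def route(action: ProposedAction) -> tuple[Route, Optional[str]]:
    """Decide whether an action may run unattended, needs approval, or must stop."""
    if not all(action.preconditions.values()):
        return Route.BLOCK, None
    if action.impact <= AUTO_MAX_IMPACT:
        return Route.AUTO, None
    # Anything above the known scale falls through to the highest authority.
    return Route.ESCALATE, AUTHORITIES.get(action.impact, "mission commander")


restart = ProposedAction("restart sensor gateway", impact=1, preconditions={"maintenance_window": True})
failover = ProposedAction("fail over primary database", impact=4, preconditions={"replica_in_sync": True})
shutdown = ProposedAction("shut down pump", impact=2, preconditions={"backup_pump_online": False})

for a in (restart, failover, shutdown):
    decision, approver = route(a)
    print(f"{a.name}: {decision.value}" + (f" ({approver})" if approver else ""))
```

Because the autonomy ceiling is a single policy parameter, raising it as confidence grows (or lowering it during an incident) is an explicit, reviewable change rather than a property of the model.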

3) Reasoning is paired with verification and validation

KCEF treats model reasoning as one input in a broader decision flow. Policies, constraints, and validation steps are embedded so that outputs can be checked before they become commitments or actions. This is how KCEF reduces the operational impact of hallucinations and reasoning degradation: through systematic verification, not by hoping the model is always right.
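A sketch of a verify-before-commit gate, in Python. The checks, the asset list, and the approval limit are hypothetical stand-ins for constraints that would come from the meaning layer and policy; the point is that a model's proposal is treated as untrusted input and only becomes a commitment after every check passes.

```python
from typing import Callable, Optional

Proposal = dict
# A check returns a failure reason, or None when the proposal passes.
Check = Callable[[Proposal], Optional[str]]

KNOWN_ASSETS = {"PUMP-7", "PUMP-9"}  # hypothetical: assets present in the meaning layer
APPROVAL_LIMIT = 10_000              # hypothetical spending constraint


def asset_exists(p: Proposal) -> Optional[str]:
    return None if p.get("asset") in KNOWN_ASSETS else f"unknown asset {p.get('asset')!r}"


def within_limit(p: Proposal) -> Optional[str]:
    return None if p.get("cost", 0) <= APPROVAL_LIMIT else "cost exceeds approval limit"


def has_rationale(p: Proposal) -> Optional[str]:
    return None if p.get("rationale") else "missing rationale"


CHECKS: list[Check] = [asset_exists, within_limit, has_rationale]


def verify(proposal: Proposal) -> list[str]:
    """Run every check so the reviewer sees all failures, not just the first."""
    results = (check(proposal) for check in CHECKS)
    return [reason for reason in results if reason is not None]


def commit_if_valid(proposal: Proposal, ledger: list) -> tuple[bool, list[str]]:
    """Only verified proposals become commitments; failures are returned, not executed."""
    failures = verify(proposal)
    if failures:
        return False, failures
    ledger.append(proposal)
    return True, []


ledger: list = []
# Imagine both proposals came from an LLM; the second references an asset that does not exist.
good = {"asset": "PUMP-7", "action": "replace seal", "cost": 1200, "rationale": "rising vibration trend"}
hallucinated = {"asset": "PUMP-12", "action": "replace impeller", "cost": 15_000}

print(commit_if_valid(good, ledger))
print(commit_if_valid(hallucinated, ledger))
```

The second proposal names an unknown asset, exceeds the limit, and gives no rationale; all three failures are reported and nothing is committed.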

4) Retrieval is governed with provenance, access control, and audit

When retrieval is used, KCEF enforces trust controls: which sources are allowed, what can be disclosed, what must be cited, and what must be logged. This helps address retrieval fragility (drift, ranking noise, and contamination) by making evidence traceable and policy-controlled.
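One way to express these trust controls, sketched in Python under stated assumptions: the source allow-list, the numeric classification levels, and the `[doc_id]` citation convention are hypothetical. Every retrieved passage gets a logged decision, only admitted passages reach the model's context, and an answer that cites nothing, or cites a passage that was filtered out, is flagged.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Passage:
    doc_id: str
    source: str
    classification: int  # hypothetical levels: 0 public, 1 internal, 2 restricted
    text: str


ALLOWED_SOURCES = {"maintenance_db", "approved_manuals"}  # hypothetical allow-list


def govern_retrieval(passages, user_clearance, audit_log):
    """Filter retrieved passages by source and clearance, logging every decision."""
    admitted = []
    for p in passages:
        if p.source not in ALLOWED_SOURCES:
            decision = "rejected: source not allowed"
        elif p.classification > user_clearance:
            decision = "withheld: exceeds clearance"
        else:
            decision = "admitted"
            admitted.append(p)
        audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "doc_id": p.doc_id,
            "decision": decision,
        })
    # Tag each admitted passage with its id so the model can cite it.
    context = "\n".join(f"[{p.doc_id}] {p.text}" for p in admitted)
    return context, [p.doc_id for p in admitted]


def citation_problems(answer, admitted_ids):
    """An answer must cite evidence, and only evidence that was actually admitted."""
    cited = set(re.findall(r"\[([^\[\]]+)\]", answer))
    if not cited:
        return ["answer cites no evidence"]
    return [f"cites unadmitted source {c}" for c in sorted(cited - set(admitted_ids))]


log = []
retrieved = [
    Passage("MAN-12", "approved_manuals", 0, "Replace the seal when vibration exceeds 7 mm/s."),
    Passage("FORUM-3", "web_forum", 0, "Just bypass the interlock."),
    Passage("INC-88", "maintenance_db", 2, "Restricted incident report."),
]
context, ids = govern_retrieval(retrieved, user_clearance=1, audit_log=log)
print(context)
print(citation_problems("Replace the seal [MAN-12].", ids))
print(citation_problems("Bypass the interlock [FORUM-3].", ids))
```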

5) Execution is policy-enforced and auditable end-to-end

KCEF’s execution fabric provides the runtime guardrails that make AI operational: embedded policy enforcement, resilience, monitoring, and full provenance from “fact → rule → decision → action → outcome.” This is what makes AI-enabled execution defensible in complex, regulated, and mission-critical environments.
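The fact → rule → decision → action → outcome chain can be sketched as an append-only, hash-chained log in Python. Hash chaining is one possible tamper-evidence technique chosen for illustration, not necessarily KCEF's mechanism, and the rule format, the `ALLOWED_ACTIONS` policy, and the actuator are hypothetical. Resilience and monitoring are out of scope for the sketch.

```python
import copy
import hashlib
import json

ALLOWED_ACTIONS = {"reduce_load"}  # hypothetical policy: actions this executor may perform


class ProvenanceLog:
    """Append-only, hash-chained trace: editing any earlier entry breaks verification."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(stage, payload, prev):
        body = json.dumps({"stage": stage, "payload": payload, "prev": prev}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def record(self, stage, payload):
        prev = self.entries[-1]["hash"] if self.entries else "genesis"
        payload = copy.deepcopy(payload)  # snapshot so later changes to the caller's dict are not logged
        self.entries.append({"stage": stage, "payload": payload, "prev": prev,
                             "hash": self._digest(stage, payload, prev)})

    def verify(self):
        prev = "genesis"
        for e in self.entries:
            if e["prev"] != prev or e["hash"] != self._digest(e["stage"], e["payload"], prev):
                return False
            prev = e["hash"]
        return True


def execute(fact, rule, log, actuator):
    """Evaluate one rule against one fact and act only if policy allows it."""
    log.record("fact", fact)
    log.record("rule", rule)
    decision = rule["then"] if fact["value"] > rule["if_above"] else "no_action"
    log.record("decision", {"rule_id": rule["id"], "decision": decision})
    if decision == "no_action":
        return None
    if decision not in ALLOWED_ACTIONS:
        log.record("action", {"name": decision, "status": "blocked by policy"})
        return None
    log.record("action", {"name": decision, "status": "dispatched"})
    outcome = actuator(decision)
    log.record("outcome", outcome)
    return outcome


log = ProvenanceLog()
fact = {"subject": "PUMP-7", "predicate": "temperatureC", "value": 95.0, "source": "sensor_feed"}
rule = {"id": "R-17", "if_above": 90.0, "then": "reduce_load"}
execute(fact, rule, log, actuator=lambda action: {"action": action, "load_pct": 70})

print([e["stage"] for e in log.entries])  # ['fact', 'rule', 'decision', 'action', 'outcome']
print(log.verify())                       # True
log.entries[0]["payload"]["value"] = 50.0  # tamper with the recorded fact
print(log.verify())                       # False
```

Any edit to an earlier entry changes its hash, so verification fails from that point on; that is what lets an auditor trust the recorded chain rather than merely read it.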

Key takeaway

KCEF does not promise perfect AI. It assumes fallibility and still enables dependable outcomes. By making meaning explicit, enforcing policy, inserting verification, and governing execution, KCEF turns AI from a conversational tool into an operational capability: governed autonomy that delivers mission and business outcomes with trust, traceability, and human accountability.